Service Bus Queues

Azure Service Bus queues offer asynchronous messaging as a (PaaS) service: one party puts messages on a queue and another consumes them. It is important to understand that queues only store messages temporarily; for permanent storage you should not use queues. Queues can absorb far greater load than traditional synchronous requests. For instance, you do not need enough machines to serve the maximum number of simultaneous requests. Instead you can let messages stack up in the queue and process them over time, as long as you can keep up in the long run and the queue does not fill up.

Cross platform (almost)

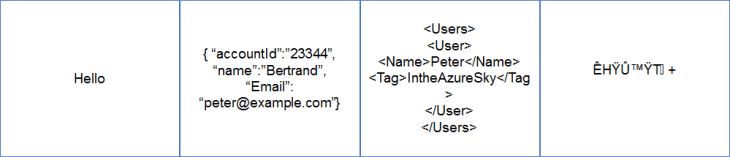

Messages are unstructured

One of the most difficult things for people to comprehend about Service Bus messages is that no structure is enforced on them. If I have access, I can post messages with nonsense data and I will succeed, as there is no XSD validation or similar when you post the messages.

It is therefore important to understand that you may run into data-quality issues when you try to interpret the messages.

Dealing with corrupt messages

If the receiver has failed a set number of times (the queue’s MaxDeliveryCount setting) to consume a message, the message is automatically moved to the dead-letter queue. Note: This value can be set/changed both in the portal and through code.

BrokeredMessage message = queueClient.Receive(TimeSpan.FromSeconds(5));

//Receive returns null if no message arrived within the timeout

if (message != null && !ValidateMessage(message))

message.DeadLetter(); //The message is corrupt, send it to the dead-letter queue
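Since the queue itself does not validate anything, the check has to live in the receiver. The following is only a minimal sketch of what a check like ValidateMessage might do once the message body has been read into a string. It assumes the body is XML with a root element Order and child elements CustomerNo and Amount; those element names, the class name and the method name are all made up for illustration.

```csharp
using System;
using System.Globalization;
using System.Xml;
using System.Xml.Linq;

public static class MessageValidationSketch
{
    // Returns true only if the body is well-formed XML with the expected
    // root element and required children (all element names are hypothetical).
    public static bool IsValidOrder(string body)
    {
        if (string.IsNullOrWhiteSpace(body))
            return false;

        XDocument doc;
        try
        {
            doc = XDocument.Parse(body);
        }
        catch (XmlException)
        {
            return false; // Not well-formed XML: treat the message as corrupt
        }

        XElement root = doc.Root;
        if (root == null || root.Name.LocalName != "Order")
            return false;

        XElement customerNo = root.Element("CustomerNo");
        if (customerNo == null || string.IsNullOrWhiteSpace(customerNo.Value))
            return false;

        // Amount must be present and parse as a number (invariant culture).
        XElement amount = root.Element("Amount");
        decimal parsedAmount;
        return amount != null
            && decimal.TryParse(amount.Value, NumberStyles.Number,
                                CultureInfo.InvariantCulture, out parsedAmount);
    }
}
```

A message that fails a check like this is a candidate for the dead-letter queue, because retrying it will not make the data any better.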

Security/Shared Access Secret Token

TCP vs HTTP Binding vs AMQP

ServiceBusEnvironment.SystemConnectivity.Mode = ConnectivityMode.AutoDetect;

ServiceBusEnvironment.SystemConnectivity.Mode = ConnectivityMode.Http;

ServiceBusEnvironment.SystemConnectivity.Mode = ConnectivityMode.Https;

ServiceBusEnvironment.SystemConnectivity.Mode = ConnectivityMode.Tcp;

value="Endpoint=sb://pitas.servicebus.windows.net;SharedAccessKeyName=IWontTellYou;SharedAccessKey=IWontTellYOuThisEither;TransportType=Amqp" />

| Ports | Used when | Description |
|---|---|---|
| 80, 443 | REST API, or .NET API with ConnectivityMode.Http or ConnectivityMode.Https, or with ConnectivityMode.AutoDetect (default) when the TCP ports below are not open | Connects to the service bus through HTTPS/HTTP |
| 9350-9354 | Relayed services and brokered messaging (queues) with ConnectivityMode.Tcp, or for the fastest connection speeds with ConnectivityMode.AutoDetect | Connects to the service bus through the fastest TCP communication protocol |
| 5671-5672 | Only for AMQP clients | These ports are used when communicating with the AMQP protocol |

Formats/Serialization

So when should you serialize as XML instead?

- You have an ancient version of your integration platform and don’t plan to upgrade. Mind the expiration of support though: extended support is expensive, and Microsoft is increasingly clear that they support only the current version and one older version of each of their platforms. The trend is also towards automatic upgrades to the latest version so that people run updated software, so it might be time to upgrade. Don’t get too far behind in your system software.

- The posting application is older and cannot serialize JSON. But in that case, can it create a SAS token?

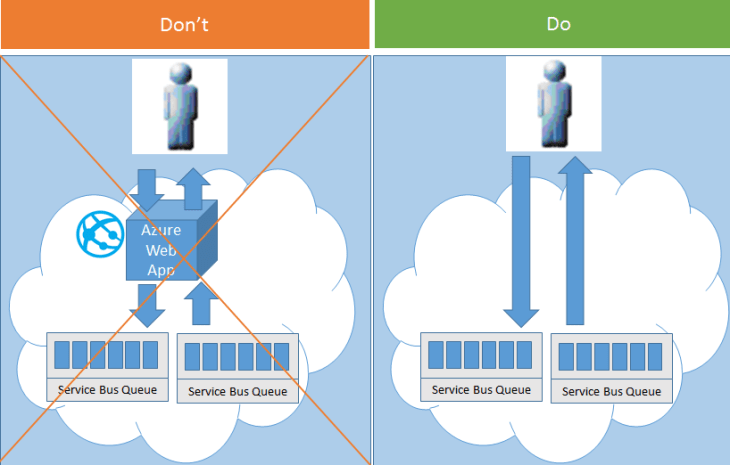

Don’t put a bottleneck in front of the service bus

As an alternative to putting an extra layer in front of the service bus, you could add a local API component closer to the sender/receiver instead of fronting the service bus with one common front end. You could also use your integration engine, such as BizTalk, to send/receive messages to/from the service bus on behalf of a source/destination that cannot speak directly with the service bus.

What to think about?

Shared access keys

I mentioned earlier that the shared access tokens are really safe: unless you have the secret key you cannot exploit the service bus. However, if you have the key you can do whatever the access key has been configured to do. This fact dictates two important security measures you should take to make it more difficult to exploit:

- Assign minimal rights to resources (read more about this in the section Set up Service Bus for Minimum Access).

- Rotate the access keys. This means that you should periodically change the access key for the partner. Read up on how to do this in the section Shared access key rotation.
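The reason the key is so powerful is that every token is derived from it. As a rough sketch (not the SDK’s own implementation), a token like the ones in the Authorization headers later in this post can be built with plain .NET: as I understand the documented format, the signature is an HMAC-SHA256 over the URL-encoded resource URI, a newline and the expiry time, keyed with the shared access key. The class and method names below are made up for illustration.

```csharp
using System;
using System.Globalization;
using System.Net;
using System.Security.Cryptography;
using System.Text;

public static class SasTokenSketch
{
    // Builds "SharedAccessSignature sr=...&sig=...&se=...&skn=..." for a resource.
    // expiryUnixSeconds is the absolute expiry in seconds since 1970-01-01 UTC.
    public static string CreateToken(string resourceUri, string keyName,
                                     string key, long expiryUnixSeconds)
    {
        string encodedUri = WebUtility.UrlEncode(resourceUri);
        string expiry = expiryUnixSeconds.ToString(CultureInfo.InvariantCulture);

        // String to sign: URL-encoded resource URI + newline + expiry.
        string stringToSign = encodedUri + "\n" + expiry;

        using (var hmac = new HMACSHA256(Encoding.UTF8.GetBytes(key)))
        {
            byte[] hash = hmac.ComputeHash(Encoding.UTF8.GetBytes(stringToSign));
            string signature = Convert.ToBase64String(hash);

            return string.Format(CultureInfo.InvariantCulture,
                "SharedAccessSignature sr={0}&sig={1}&se={2}&skn={3}",
                encodedUri, WebUtility.UrlEncode(signature), expiry, keyName);
        }
    }
}
```

Anyone holding the key can mint such tokens at will until the key is replaced, which is why minimal rights and rotation matter.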

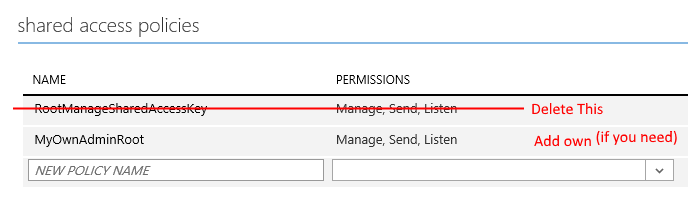

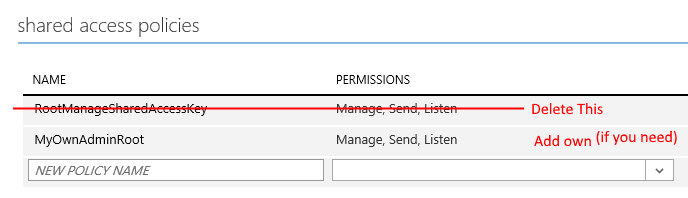

Set up Service Bus for Minimum Access

- First of all, I would recommend removing the default RootManageSharedAccessKey on the namespace and creating a new account for managing your service bus. With the default in place, an offender who knows your namespace already knows the key name and only has to crack your secret, so a different key name makes it more difficult to gain access to your namespace. So set up another manage account (with a different key name) for managing the Service Bus. Maybe I am a bit paranoid here, but it is a bit like not naming your computer administrator account Administrator.

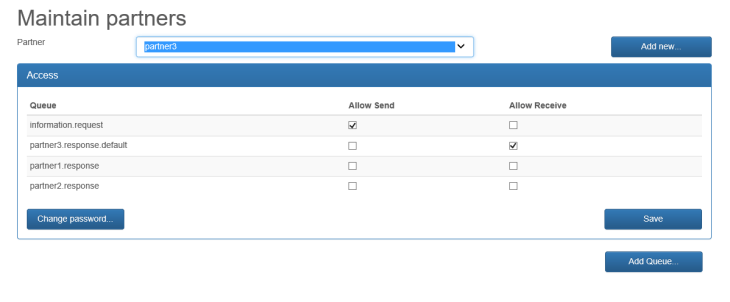

- Set up access on resources rather than on the namespace. In a common send/receive scenario this means you assign the partner Send rights on the request queue (incoming requests) and Listen rights on the response/receive queue. By default this forces the partner to create different tokens (or connection strings, as you would probably call them when using the .NET API) for sending and receiving messages. If the partner has access to many queues and you have set up key rotation, you can end up with an administrative nightmare and cause the partner some headaches in matching the right key to the right queue. I will discuss how I handled this problem later in the post, in Handling rights on multiple resources.

- Set up separate shared access keys for each partner. This enables you to remove access for a certain partner without affecting others. A partner does not have to be a company; it may very well be an actor within a company (like a system). You can also monitor activity better. For example, if multiple partners send messages to the same queue, don’t give them the same key/secret for posting messages.

- If you have an integration engine that is going to consume messages, like BizTalk, you could perhaps simplify management by placing a key/secret for BizTalk on the namespace. But that is basically the only scenario in which I recommend adding keys and secrets on the entire namespace.

| Function | Permission |
|---|---|
| Send message to queue | Send |
| Receive message from queue | Listen |
| Call Relay Service | Send |
| Register and serve relay service calls from WCF Service | Listen |
| Manage namespace | Manage |

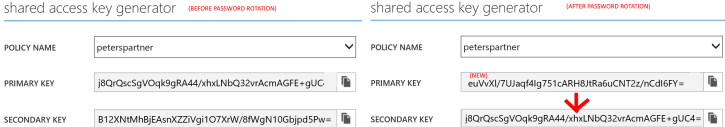

Shared access key rotation

As previously mentioned you should rotate your access keys. Microsoft did think about this when designing the product so each access key has two allowed secrets (one primary and one secondary).

/// <summary>

/// Moves the primary key to the secondary key and sets a new primary key for the partner

/// </summary>

/// <param name="partnerName">The partner name to replace keys for</param>

/// <param name="newPrimaryKey">Return value: the new primary key</param>

/// <param name="newSecondaryKey">Return value: the new secondary key</param>

/// <returns>True when the keys were changed without errors</returns>

internal bool ChangeAccessKeys(string partnerName, out string newPrimaryKey, out string newSecondaryKey)

{

newPrimaryKey = "";

newSecondaryKey = "";

NamespaceManager namespaceManager = NamespaceManager.CreateFromConnectionString(string.Format(SB_CONNECTIONSTRING_TEMPLATE, SBNameSpace, ManagePolicy, ManagePolicyAccessKey));

string newPrimary = SharedAccessAuthorizationRule.GenerateRandomKey(); //Randomize new key to use for all instances

var allQueues = namespaceManager.GetQueues().ToList(); //Fetch all queues from namespace

foreach (var item in allQueues)

{

//For the selected queue, see if there is a SharedAccessKey rule for the partner

var rule = item.Authorization.Where(r => r.ClaimType == "SharedAccessKey"

&& r.KeyName.Equals(partnerName, StringComparison.InvariantCultureIgnoreCase)).FirstOrDefault();

if (rule != null) //If there was an old rule then update it

{

SharedAccessAuthorizationRule sasRule = (SharedAccessAuthorizationRule)rule;

newSecondaryKey = sasRule.PrimaryKey; //Just the out variable

sasRule.SecondaryKey = sasRule.PrimaryKey; //Move the old primary key to the secondary key for this queue and "user"

sasRule.PrimaryKey = newPrimary; //Set the new primary key

namespaceManager.UpdateQueue(item); //Update Queue

}

}

//No errors assume OK password change

newPrimaryKey = newPrimary;

return true;

}

At least once vs at most once – Message consumption pattern

- The message must not be consumed more than once, for example in your accounting or bank system. Here you may want to process the message at most once, and write to a log if processing fails so that you can repair from the logs. If you catch an error you may want to move the message to another queue or the dead-letter queue, instead of letting it be consumed again, so you can take action on it. Risking crediting a bank account multiple times is not OK; it is better to raise an exception.

- It does not matter much if the message is consumed multiple times (at least once). While multiple posts to your Facebook timeline are no fun, they may not matter so much in the long run. You could remove the extra post quite easily, or possibly even detect it by looking for identical posts from the last 15 minutes. This is also an OK scenario if you have some sort of logging, GPS tracking or fire alarm detection system where the potential loss of data is worse than duplicates.
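When messages can arrive more than once, one common way to make the duplicates harmless is to remember which message ids have already been handled. The sketch below shows the idea only: it assumes every message carries a unique id, and it keeps the ids in memory, which would not survive a restart (a real system would need a durable store). The class name and its members are made up for illustration.

```csharp
using System;
using System.Collections.Generic;

public sealed class IdempotentHandlerSketch
{
    private readonly HashSet<string> _processedIds = new HashSet<string>();
    private readonly Action<string> _handler;

    public IdempotentHandlerSketch(Action<string> handler)
    {
        if (handler == null) throw new ArgumentNullException("handler");
        _handler = handler;
    }

    // Returns true if the handler ran, false if this id was seen before.
    public bool Handle(string messageId, string body)
    {
        if (_processedIds.Contains(messageId))
            return false; // Duplicate delivery: skip the work

        // If the handler throws, the id is not recorded,
        // so a redelivery of the same message is processed again.
        _handler(body);
        _processedIds.Add(messageId);
        return true;
    }
}
```

With a guard like this in place, at-least-once delivery behaves much like exactly-once from the point of view of the business logic, as long as the id store is reliable.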

API

message = queueClient.Receive(); //With ReceiveMode.ReceiveAndDelete this directly removes the message from the queue

//Do your stuff.

//If it fails you must handle it yourself if you want to retry, for example by putting the message somewhere else

Authorization: SharedAccessSignature sr=your-namespace&sig=Fg8yUyR4MOmXfHfj55f5hY4jGb8x2Yc%2b3%2fULKZYxKZk%3d&se=1404256819&skn=RootManageSharedAccessKey

Host: your-namespace.servicebus.windows.net

Content-Length: 0

API

message = queueClient.Receive(); //With ReceiveMode.PeekLock (the default) the message is locked, not removed

//Do your stuff.

message.Complete(); //Removes the message from the queue

Authorization: SharedAccessSignature sr=your-namespace&sig=Fg8yUyR4MOmXfHfj55f5hY4jGb8x2Yc%2b3%2fULKZYxKZk%3d&se=1404256819&skn=RootManageSharedAccessKey

Host: your-namespace.servicebus.windows.net

Content-Length: 0

//Do your stuff, then use the message id + lock key from the broker properties to commit the message when successful. You could also use the location property for the DELETE

DELETE https://your-namespace.servicebus.windows.net/yourqueue/messages/31907572-1647-43c3-8741-631acd554d6f/7da9cfd5-40d5-4bb1-8d64-ec5a52e1c547?timeout=60 HTTP/1.1

Authorization: SharedAccessSignature sr=rukochbay&sig=rg9iGsK0ZyYlvhIqyH5IS5tqmeb08h8FstjHLPj3%2f8g%3d&se=1404265946&skn=RootManageSharedAccessKey

Host: your-namespace.servicebus.windows.net

Content-Length: 0

Handling rights on multiple resources

If you add Shared Access Policies through the portal, each queue gets its own access secret, which is very secure but can be difficult to maintain. An alternative is a tool that assigns the same secret to all the queues that the partner should be able to access. With a tool like this you can also set up your other queue settings the way you want without being a trained and accustomed portal user. You can download a working sample related to this post (see beginning of post). Separate access keys still offer better security, but cause more problems when the keys need to be updated.

Send Multiple messages in one go (Batch Sends)

If you are going to send multiple messages that happen simultaneously, for example CreateCustomer and CreateOrder, you can use the batch send functionality, which is more efficient than sending them individually. Once the service bus receives the batch it splits it into separate messages, so it is very useful if you can find a use case for it.

Send multiple through API

QueueClient queueClient = QueueClient.CreateFromConnectionString(ConfigurationManager.AppSettings["Microsoft.ServiceBus.ConnectionString"], "TestQueue");

List<BrokeredMessage> messageList = new List<BrokeredMessage>();

Microsoft.ServiceBus.Messaging.BrokeredMessage mes1 = new BrokeredMessage("Msg 1");

mes1.Properties.Add("CustomerNo", "1");

messageList.Add(mes1);

Microsoft.ServiceBus.Messaging.BrokeredMessage mes2 = new BrokeredMessage("Msg 2");

mes2.Properties.Add("CustomerNo", "2");

messageList.Add(mes2);

queueClient.SendBatch(messageList);

Send multiple through REST API

Authorization: SharedAccessSignature sr=your-namespace&sig=ASADADADADASASASADADAS&se=1404256819&skn=Sender

Content-Type: application/vnd.microsoft.servicebus.json

Host: your-namespace.servicebus.windows.net

Content-Length: 18

Expect: 100-continue

[

{

"Body":"Msg 1",

"BrokerProperties":{"Label":"M1"},

"UserProperties":{"CustomerNo":"1"}

},

{

"Body":"Msg 2",

"BrokerProperties":{"Label":"M2"},

"UserProperties":{"CustomerNo":"2"}

}

]

Note that Content-Type should be set to application/vnd.microsoft.servicebus.json

Important: As far as I can find out, the old limitation that a batch POST cannot be larger than 256 KB is still valid (should any reader have other information, please leave a comment with a link). So you cannot send huge batches.
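If you have more messages than fit under the limit, one option is to split them into several batches before sending. The sketch below is only an illustration of that idea: it counts the UTF-8 size of the message bodies and ignores headers and properties, so the limit you pass in should leave some margin below 256 KB. The class and method names are made up.

```csharp
using System;
using System.Collections.Generic;
using System.Text;

public static class BatchSplitSketch
{
    // Groups message bodies into batches whose total body size (UTF-8 bytes)
    // stays at or below maxBatchBytes. Order is preserved.
    public static List<List<string>> Split(IEnumerable<string> bodies, int maxBatchBytes)
    {
        var batches = new List<List<string>>();
        var current = new List<string>();
        int currentBytes = 0;

        foreach (string body in bodies)
        {
            int size = Encoding.UTF8.GetByteCount(body);
            if (size > maxBatchBytes)
                throw new ArgumentException("A single message exceeds the batch limit.");

            // Start a new batch when adding this body would pass the limit.
            if (current.Count > 0 && currentBytes + size > maxBatchBytes)
            {
                batches.Add(current);
                current = new List<string>();
                currentBytes = 0;
            }

            current.Add(body);
            currentBytes += size;
        }

        if (current.Count > 0)
            batches.Add(current);

        return batches;
    }
}
```

Each resulting list could then be sent as its own batch, for example with a conservative limit such as 200 * 1024 bytes.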

BizTalk / Partitions

Broadcasting changes with Topics

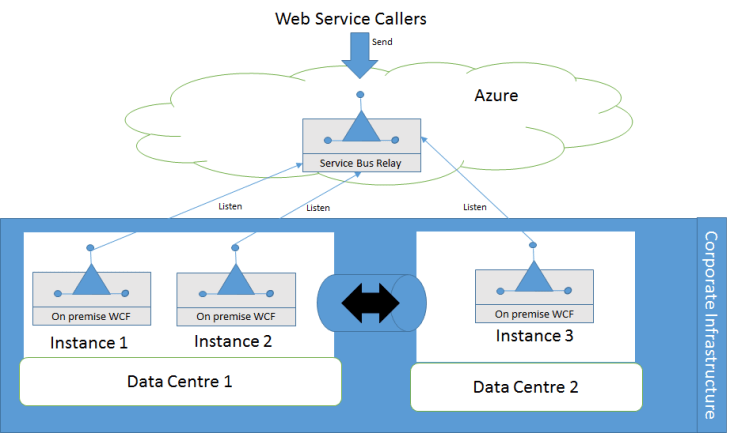

Synchronous integration with (most likely) on premise resources

High availability for Service Bus Relays

1. Add retry functionality at the sender (caller). This is easily done if you use BizTalk or other integration software for calling the relay.

2. Have multiple instances (ideally 3 or more if performance does not dictate more) of the Relay Service (WCF service on premise). If you have two data centres with different internet routing it could be wise to put one instance at the other location.
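If the caller is custom code rather than BizTalk, the retry in point 1 could look something like the sketch below. It is a generic helper with made-up names, not part of the Service Bus API: it retries any call a fixed number of times and doubles the wait between attempts. It catches every exception for brevity; in practice you would probably only retry errors you know to be transient.

```csharp
using System;
using System.Threading;

public static class RetrySketch
{
    // Runs the call, retrying on failure up to maxAttempts in total,
    // and doubling the delay after each failed attempt.
    public static T Execute<T>(Func<T> call, int maxAttempts, TimeSpan initialDelay)
    {
        if (call == null) throw new ArgumentNullException("call");
        if (maxAttempts < 1) throw new ArgumentOutOfRangeException("maxAttempts");

        TimeSpan delay = initialDelay;
        for (int attempt = 1; ; attempt++)
        {
            try
            {
                return call();
            }
            catch (Exception)
            {
                if (attempt >= maxAttempts)
                    throw; // Out of attempts: let the caller see the last error

                Thread.Sleep(delay);
                delay = TimeSpan.FromTicks(delay.Ticks * 2);
            }
        }
    }
}
```

Combined with several relay service instances, a short retry like this means a single failed instance is mostly invisible to the caller.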

Avoid long-running relay services

As always when it comes to synchronous calls, you should avoid long-running processes. For report creation and other time-consuming services, put the request on a queue (SB queue, MSMQ queue, Windows Service Bus) and have a background job create the reports. You can then return “Report request submitted” directly and have your service handle other incoming requests instead. This is not specific to Service Bus Relays but is good architecture overall. Consider that the first version of Azure had Web Roles and Worker Roles: web roles handled web requests and worker roles handled heavy/batch processing.
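The pattern can be sketched in a few lines. In the example below an in-memory BlockingCollection stands in for the real queue (SB queue, MSMQ and so on) purely to keep the sketch self-contained, and a background task plays the role of the worker; the class name and its members are made up for illustration.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading.Tasks;

public sealed class ReportRequestQueueSketch : IDisposable
{
    // In-memory stand-in for a durable queue.
    private readonly BlockingCollection<string> _requests = new BlockingCollection<string>();
    private readonly Task _worker;

    public ReportRequestQueueSketch(Action<string> createReport)
    {
        if (createReport == null) throw new ArgumentNullException("createReport");

        // Background job: picks up requests one by one and does the heavy work.
        _worker = Task.Factory.StartNew(() =>
        {
            foreach (string request in _requests.GetConsumingEnumerable())
                createReport(request);
        }, TaskCreationOptions.LongRunning);
    }

    // Called by the service: queues the request and returns immediately.
    public string Submit(string reportRequest)
    {
        _requests.Add(reportRequest);
        return "Report request submitted";
    }

    // Stops accepting requests and waits for the queued ones to finish.
    public void Dispose()
    {
        _requests.CompleteAdding();
        _worker.Wait();
        _requests.Dispose();
    }
}
```

The service call itself only pays the cost of Submit, so it stays short no matter how long the report takes to build.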